Published on 09/02/2026

Visibility in ChatGPT & Co: the ultimate guide for your B2B website

The digital landscape is undergoing what is arguably its most significant transformation since the arrival of Google: user search behaviour has changed fundamentally, including in the B2B sector.

More and more people are relying on zero-click searches, in which the answer is delivered without a click through to a website. When looking for solutions or providers, many bypass search engines such as Google and turn directly to AI systems like ChatGPT, Gemini, or Perplexity. And those who continue to use search engines? They increasingly rely on AI Overviews, the successor to Google's Search Generative Experience (SGE), which integrates generative AI directly into search results.

For companies and their marketing teams, this development does not mean that traditional SEO can be ignored (more on this later), but it does increase the urgency of measures to improve visibility within established Large Language Models (LLMs). The new keyword is "AI visibility": how likely is it that your brand, web content, and services will be recognised, understood, and then included in the responses of generative AI models?

To achieve this, web content must be accessible, perceived as relevant, and classified as trustworthy. Many companies are not prepared for this with their current digital offerings. They risk becoming digitally invisible – and face a significant drop in traffic.

1. How do you ensure that web content is accessible to ChatGPT & Co?

Technical accessibility starts with controlling crawler access, and the key to this is the robots.txt file. It governs whether AI-relevant bots such as OAI-SearchBot, GPTBot, and ChatGPT-User are allowed to crawl your pages at all. It is also important that content is delivered server-side as HTML, since OpenAI's crawlers cannot execute JavaScript. Anything that is not present as static HTML in the source code remains invisible to these crawlers. Fast loading times are equally important.
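A quick way to verify crawler access is to test a robots.txt against the bot names mentioned above. The following is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content, the policy it expresses (GPTBot blocked, the other two bots allowed), and the example.com URL are purely hypothetical, and real crawlers may match user agents slightly differently than this parser does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt, for illustration only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: *
Disallow: /internal/
"""

# Bot names as listed in the article.
AI_BOTS = ["OAI-SearchBot", "GPTBot", "ChatGPT-User"]


def check_ai_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return, per bot, whether the given robots.txt allows fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_BOTS}


access = check_ai_access(ROBOTS_TXT, "https://www.example.com/solutions/")
for bot, allowed in access.items():
    print(f"{bot}: {'allowed' if allowed else 'blocked'}")
```

To check a live site instead, `RobotFileParser.set_url()` followed by `read()` loads the file over HTTP before `can_fetch()` is called.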

Another control mechanism is the llms.txt file, with a clearly structured layout and unambiguous, static URLs. It selectively lists the URLs and content that are relevant to Large Language Models. However, an llms.txt file is no guarantee of good visibility, as some bots reportedly largely ignore it.
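To illustrate what such a file can look like, here is a small sketch that generates one. It assumes the Markdown layout commonly described in the llms.txt proposal (a title, a short summary as a blockquote, then sections with link lists). The function name, company name, URLs, and descriptions are all hypothetical.

```python
def build_llms_txt(
    title: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Build llms.txt content from a title, a summary, and link sections.

    Each section maps a heading to (link text, URL, description) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, description in links:
            lines.append(f"- [{name}]({url}): {description}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical example data.
llms_txt = build_llms_txt(
    title="Example GmbH",
    summary="B2B provider of digital products and platforms.",
    sections={
        "Solutions": [
            ("Solutions", "https://www.example.com/solutions/",
             "Overview of services"),
        ],
        "Insights": [
            ("Blog", "https://www.example.com/blog/",
             "Articles on digital transformation"),
        ],
    },
)
print(llms_txt)
```

The result would be served as a plain-text file at the root of the domain, next to robots.txt.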

2. What can I do to be mentioned more frequently by ChatGPT & Co?

In addition to machine-readable formatting and semantic clarity, AI models favour content that is current and trustworthy. This is comparable to traditional SEO, where Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T for short) have long served as a guiding framework.

Citing sources, for example, enhances the trustworthiness and credibility of web content. An imprint and a privacy policy also provide the necessary transparency. Even stronger signals include mentions in reputable specialist media or on authoritative third-party sites (e.g. Wikipedia), backlinks from trusted domains, and positive mentions on social media, in podcasts, on review platforms, or in forums.

Repurposing content is also beneficial – for instance, covering a topic across podcasts (note: do not forget transcripts), blog articles, and external trade publications. The chances of being cited are further increased by providing exclusive content – above all, the publication of studies or benchmarks based on proprietary surveys, analyses, or data.

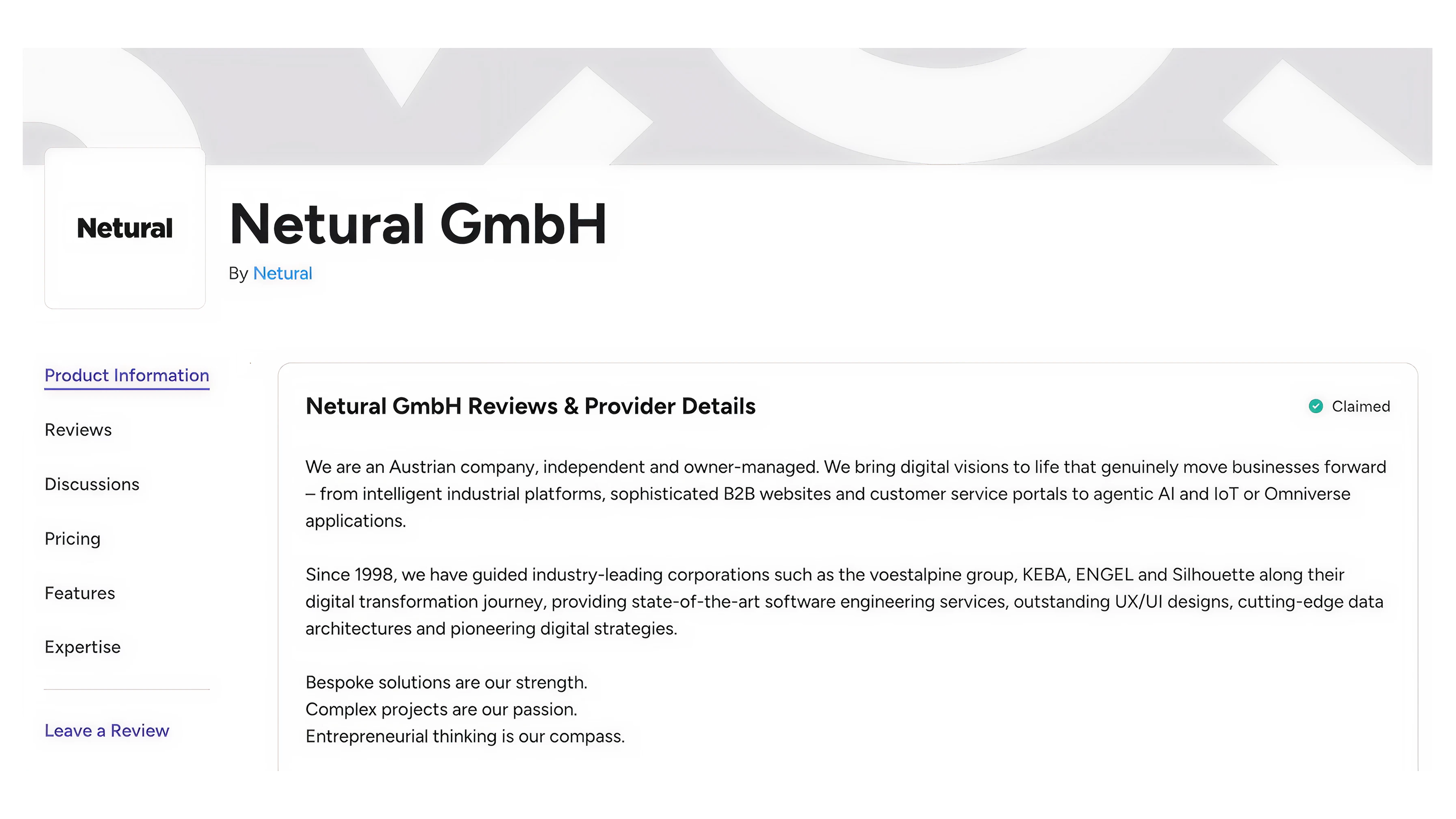

Example of a company profile on a common review platform

3. How do I ensure that my web content is correctly understood and reproduced?

For B2B companies with complex content and offerings, "structured data" is essential. Structured data consists of standardised information embedded into a website's HTML code using formats such as JSON-LD, Microdata, or RDFa. It gives web page content a clear structure so that search engines, LLMs, and AI bots can better understand context and meaning. The vocabulary of Schema.org, founded by Google, Microsoft, Yahoo, and Yandex, has established itself as the standard for this purpose.
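As an illustration, the following sketch generates a JSON-LD snippet with the Schema.org type `Organization` and wraps it in the script element that is placed in a page's HTML. The function name, company name, and URLs are hypothetical. Which types and properties make sense depends on the page in question.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Return a <script> element containing Schema.org Organization markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # Profiles on third-party sites that refer to the same organisation.
        "sameAs": same_as,
    }
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so the JSON can never close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical example data.
snippet = organization_jsonld(
    name="Example GmbH",
    url="https://www.example.com/",
    same_as=["https://www.linkedin.com/company/example-gmbh"],
)
print(snippet)
```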

To ensure that content, for example in a company blog, is citable, it should be divided into concise, logically structured sections, each addressing one key point. This structure makes it easier for LLMs to capture and process content. Short summaries, tabular comparisons, and a question-and-answer format also make it easier for LLMs to extract information. The wording of content, including the formulation of questions, should mirror the language of the target audience (i.e. AI users) as closely as possible. For images, alt attributes and image descriptions should not be overlooked.
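The point about images is easy to check automatically. The sketch below uses Python's standard `html.parser` to list images whose alt text is missing or empty. The class name, function name, and HTML fragment are hypothetical. Note that an empty alt attribute is legitimate for purely decorative images, so the result is a list for review rather than a list of errors.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> without a non-empty alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src") or "(no src)")


def find_images_without_alt(html: str) -> list[str]:
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing


# Hypothetical page fragment.
PAGE = """
<article>
  <img src="/team.jpg" alt="Project team in a workshop">
  <img src="/chart.png">
  <img src="/logo.svg" alt="">
</article>
"""

print(find_images_without_alt(PAGE))
```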

4. How can I measure my brand's and content's AI visibility?

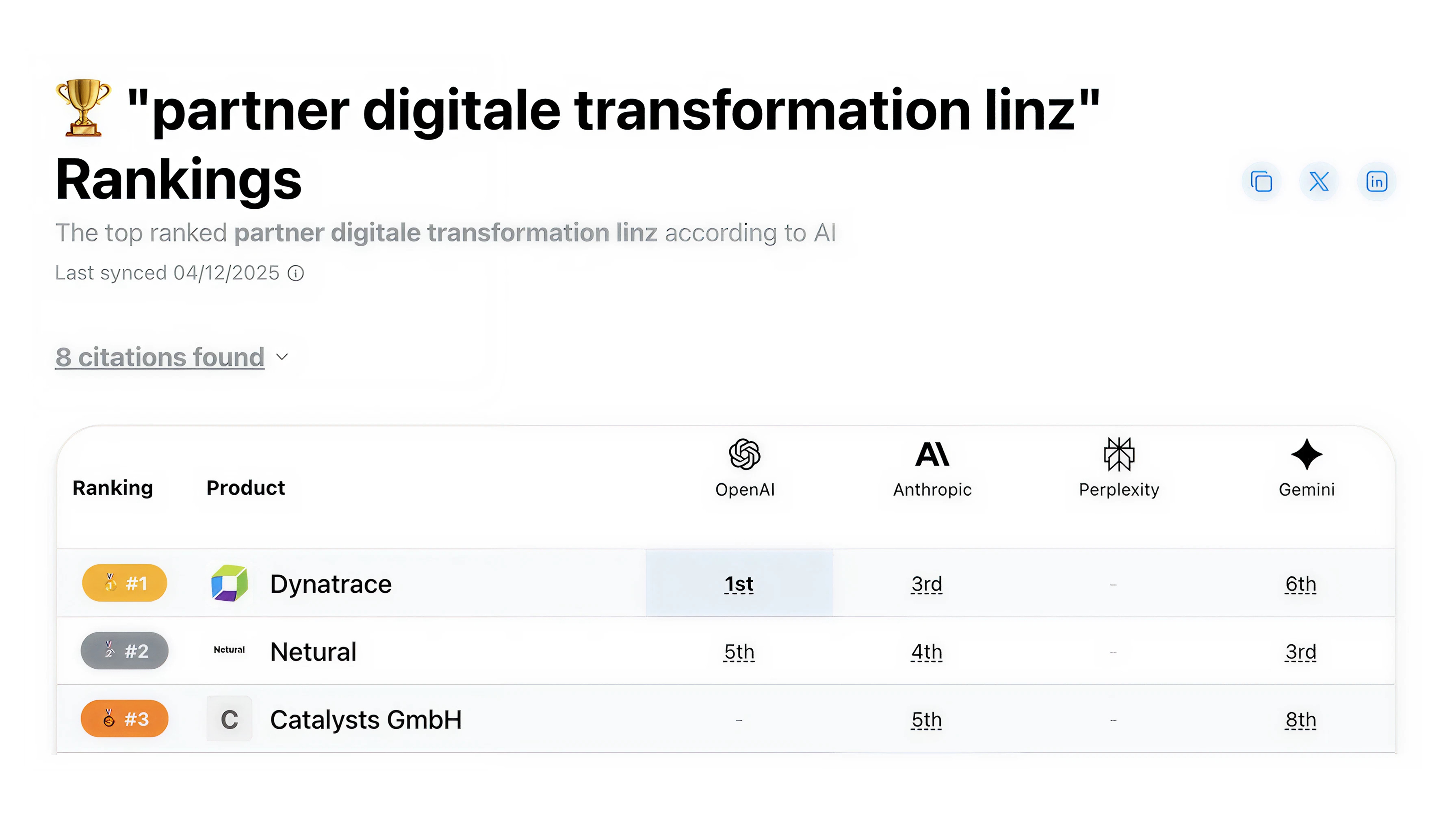

To measure the success of GEO (Generative Engine Optimisation) or LLMO (Large Language Model Optimisation) efforts, the use of specialised AI search monitoring tools is recommended – such as Peec AI, OtterlyAI, or the Agent Analytics from Profound. productrank.ai is a good starting point to initially check whether and how you rank for certain queries in ChatGPT, Anthropic, Perplexity, and Gemini. The list of citations also helps identify potential third-party sites for media partnerships.

Ranking of Netural for the search term "Partner Digitale Transformation Linz"

Depending on which tools you use, you can regularly measure how your visibility across various AI chats develops compared to competitors, which sources are being cited, and even which questions your target audience is posing to AI.
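The basic idea behind such a comparison can be sketched in a few lines: collect AI responses to a fixed set of prompts and count how often each brand is mentioned. This is a deliberately simplified illustration and not how the tools named above work internally. The function name, brand names, and responses are invented for the example.

```python
from collections import Counter


def mention_share(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Return, per brand, the share of responses that mention it."""
    counts: Counter[str] = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Simple case-insensitive substring match.
            if brand.lower() in lowered:
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Invented sample responses, for illustration only.
RESPONSES = [
    "For digital transformation, Example GmbH and Rival AG are often named.",
    "Example GmbH is one option for a website relaunch.",
    "Consider Example GmbH for B2B website projects.",
    "There are several regional agencies to choose from.",
]

shares = mention_share(RESPONSES, ["Example GmbH", "Rival AG"])
for brand, share in shares.items():
    print(f"{brand}: mentioned in {share:.0%} of responses")
```

Repeating the same prompts at regular intervals turns these shares into a trend that can be compared against competitors.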

What is the best approach to increasing my visibility in ChatGPT & Co?

- Determine the status quo
- Establish the technical foundation (structured data, robots.txt, etc.)
- Identify relevant topics and questions of the target audience
- Create current, relevant content with an appropriate structure
- Identify authoritative third-party sites
- Build an external presence (create profiles and invest in PR)
- Establish ongoing monitoring

Note: We are currently going through this process ourselves for the relaunch of our website netural.com, and we apply what we learn directly to our client projects.

What should I absolutely avoid?

Do not ignore GEO: there are no signs that ChatGPT & Co are losing relevance. At the same time, GEO does not mean that content should from now on be written and optimised exclusively for AI. People, and above all your target audience, must remain the focus so that content stays relevant. Furthermore, do not rely solely on your own website, and critically reconsider paywalls or download forms for important content.

With regard to SEO: SEO and GEO complement one another. Traditional SEO should not be neglected, as it remains important for indexing and discoverability. What many do not realise is that search results in ChatGPT are partly influenced by the Bing Search Index. This means that visibility on Bing must not be overlooked. An important step towards achieving this is setting up Bing Webmaster Tools. Bing Webmaster Tools (Bing WMT) is to Bing what Google Search Console is to Google: a free service that allows websites to be submitted to the Bing crawler so that they appear in the search engine. IndexNow pings help to get content indexed more quickly.
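An IndexNow ping is an HTTP request that tells participating search engines which URLs have changed. The sketch below only builds such a request, following the publicly documented IndexNow JSON format as we understand it, and does not send anything. The host, key, and URLs are placeholders. It also assumes the key file is hosted at the root of the domain.

```python
import json
from urllib.request import Request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> Request:
    """Build (but do not send) an IndexNow submission for the given URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumption: the key file is hosted at the root of the domain.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Placeholder values, for illustration only.
request = build_indexnow_request(
    host="www.example.com",
    key="0123456789abcdef0123456789abcdef",
    urls=["https://www.example.com/blog/ai-visibility/"],
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))

# To actually submit the ping:
#   from urllib.request import urlopen
#   with urlopen(request) as response:
#       print(response.status)
```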

What support does Netural offer in the area of GEO and LLMO?

GEO has moved from being an option to an obligation. We help our clients meet this obligation. Whilst we do not offer GEO or LLMO services in isolation, they are an integral part of our website projects. Are you planning a website relaunch in 2026 and want to take AI visibility into account from the outset? Then get in touch with us today.